Every month a new browser-based generator claims to be the best free AI image tool on the internet. The claim is tempting because the barrier to entry is low: no local setup, no GPU, and no graph-building. But “best” is usually undefined. If you do not specify whether you care about turnaround time, prompt fidelity, export rights, resolution, or style diversity, you are not comparing tools. You are comparing marketing pages. A serious review starts by defining the job the generator must perform.

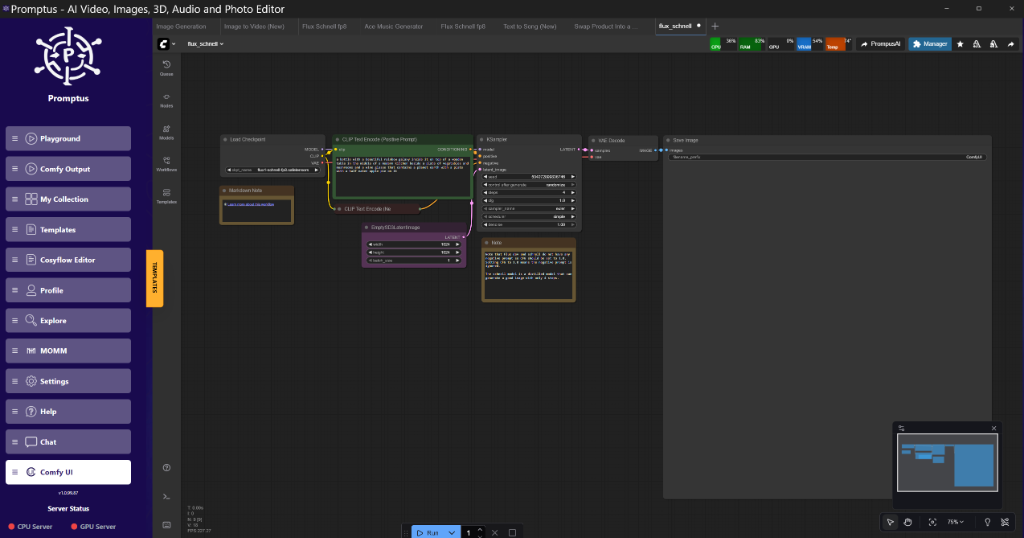

For most readers there are three common jobs. The first is ideation: creating enough visual material to decide whether an idea is worth developing. The second is presentation: producing outputs that are clean enough to share with a client or collaborator without apologising for obvious artifacts. The third is production support: generating drafts that can be refined later in a more controllable stack such as ComfyUI, a managed API workflow, or a design pipeline that includes masks, upscaling, and version control. A free generator can be excellent at one of these jobs and poor at the others.

The framework that actually works

Start with prompt fidelity. Give the service one prompt with clear subject matter, one with layout instructions, and one with style language. If the model keeps replacing your composition with generic “pretty image” behaviour, it is failing the most basic requirement. Then check latency under load by running the same prompts at different times of day. A free tool that is lightning fast at 09:00 and unusable at 18:00 is not really free in practice, because the cost is paid in waiting. Next, examine the output policy. Resolution caps, download compression, and forced watermarks define how useful the tool really is. Many services look impressive until you save the file and discover that the export is too soft to crop, too small to present, or branded too heavily for professional use.
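Parts of these three probes can be scripted. The Python sketch below is illustrative only. The prompt texts, the 30-second waiting budget, and the 2048-pixel long-edge floor are assumptions to replace with your own, and none of the functions is tied to any particular generator's API. It groups timed runs by hour of day to expose the 09:00 versus 18:00 problem. It also reads a PNG export's pixel dimensions straight from its IHDR header, so it needs nothing beyond the standard library. Compression artifacts and watermarks still need a human eye.

```python
import statistics
import struct
from collections import defaultdict

# Hypothetical probe prompts, one per fidelity axis described above.
PROBE_PROMPTS = {
    "subject": "a red bicycle leaning against a white brick wall",
    "layout": "one coffee cup in the lower-left third, empty space on the right",
    "style": "a lighthouse at dusk, rendered as a linocut print",
}


def latency_by_hour(samples: list[tuple[int, float]]) -> dict[int, float]:
    """Median seconds-to-image for each hour of day, from (hour, seconds) pairs."""
    buckets: dict[int, list[float]] = defaultdict(list)
    for hour, seconds in samples:
        buckets[hour].append(seconds)
    return {hour: statistics.median(times) for hour, times in sorted(buckets.items())}


def worst_hour_ok(samples: list[tuple[int, float]], budget_s: float = 30.0) -> bool:
    """True if even the slowest hour's median wait fits the (assumed) budget."""
    return max(latency_by_hour(samples).values()) <= budget_s


PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_dimensions(data: bytes) -> tuple[int, int]:
    """Width and height from the IHDR chunk, which must be the first PNG chunk."""
    if len(data) < 24 or data[:8] != PNG_SIGNATURE or data[12:16] != b"IHDR":
        raise ValueError("not a PNG file")
    width, height = struct.unpack(">II", data[16:24])
    return width, height


def export_ok(data: bytes, min_long_edge: int = 2048) -> bool:
    """Hypothetical export floor: the longer side must reach min_long_edge pixels."""
    return max(png_dimensions(data)) >= min_long_edge
```

You collect the `(hour, seconds)` samples yourself, by hand or through whatever logging your workflow allows. Bucketing by hour matters because a single fast afternoon tells you little about the median wait at peak time.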

Style control is another dividing line. Some tools let you steer with meaningful prompt vocabulary, while others flatten everything into the same polished editorial look. Tools of the latter kind can still be fun, but none of them is the best generator if you need concept diversity. Finally, read the rights language. A surprising number of free services are generous with throughput while staying vague about ownership, retention, or reuse of prompts. If you are generating anything commercially sensitive, that is not a footnote. It is the product.
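Rights language is hard to score automatically, but writing the decision rule down means every tool is judged the same way. In this hypothetical Python sketch, each of the three questions raised above (ownership, retention, prompt reuse) gets one of three states. Restrictive terms always fail, while vague terms only count against a tool when the work is commercially sensitive. The category names and this strictness policy are assumptions, not a legal standard.

```python
from enum import Enum


class Terms(Enum):
    GRANTED = "stated in the user's favour"
    RESTRICTED = "stated, but restrictive"
    UNSTATED = "vague or missing"


# The three questions raised above; the exact wording is illustrative.
RIGHTS_QUESTIONS = ("ownership of outputs", "retention of uploads", "reuse of prompts")


def rights_gate(answers: dict[str, Terms], commercially_sensitive: bool) -> tuple[bool, list[str]]:
    """Return (passes, problems) under an assumed policy: restrictive terms
    always fail, and vague terms also fail when the work is sensitive."""
    problems = []
    for question in RIGHTS_QUESTIONS:
        terms = answers.get(question, Terms.UNSTATED)  # silence counts as vague
        if terms is Terms.RESTRICTED or (commercially_sensitive and terms is Terms.UNSTATED):
            problems.append(f"{question}: {terms.value}")
    return not problems, problems
```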

How the browser winner usually emerges

The best free generator is usually the one that gets out of your way. It responds quickly, preserves subject identity across retries, exposes enough model behaviour that you can learn from it, and exports a file that still has value outside the browser tab. It does not need to beat a node graph on precision. It needs to be honest, fast enough, and structurally useful. If the service gives you good concept images that can move into another workflow without friction, it is doing its job.
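Subject identity across retries is hard to measure without a dedicated model, but a crude first check is whether retries of the same prompt stay visually close. The sketch below uses a simple average hash instead: it downsamples each image to 8×8, sets one bit per cell brighter than the mean, and compares retries by Hamming distance. It assumes you have already decoded each image into a grayscale grid of 0 to 255 integers with a library of your choice. The 10-bit tolerance is a hypothetical threshold. A global hash can pass two images that share a layout but show different subjects, so treat it as a filter before looking, not a verdict.

```python
def average_hash(gray: list[list[int]], size: int = 8) -> int:
    """Box-downsample a grayscale grid to size x size cells, then set one bit
    per cell (row-major) that is brighter than the mean of all cells."""
    h, w = len(gray), len(gray[0])
    if h < size or w < size:
        raise ValueError("image smaller than hash grid")
    cells = []
    for i in range(size):
        rows = gray[i * h // size:(i + 1) * h // size]
        for j in range(size):
            c0, c1 = j * w // size, (j + 1) * w // size
            block = [px for row in rows for px in row[c0:c1]]
            cells.append(sum(block) / len(block))
    mean = sum(cells) / len(cells)
    bits = 0
    for value in cells:
        bits = (bits << 1) | (value > mean)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def retries_consistent(hashes: list[int], max_distance: int = 10) -> bool:
    """Hypothetical threshold: every retry stays within max_distance bits of the first."""
    first = hashes[0]
    return all(hamming(first, other) <= max_distance for other in hashes[1:])
```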

That is why the winner often changes depending on use case. For social thumbnails, speed and contrast may matter more than subtle prompt control. For product mockups, fidelity and clean backgrounds matter more than aggressive stylistic flourish. For research and teaching, transparency matters because you want readers to understand how the tool behaves rather than worship a black box. The “best” badge should therefore be treated as a workflow decision, not a universal truth.
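Per-use-case weights make the point that the winner depends on the job concrete. The weights and tool ratings below are placeholders invented for illustration, not measurements of any real service. The sketch only shows how the same two rating profiles produce different winners once the weighting changes. Unrated criteria count as zero, a deliberately harsh choice.

```python
# Placeholder weights per use case, echoing the priorities described above.
WEIGHTS = {
    "social_thumbnail": {"speed": 3, "contrast": 3, "prompt_control": 1},
    "product_mockup": {"fidelity": 3, "clean_background": 3},
    "research_teaching": {"transparency": 3, "fidelity": 1},
}

# Invented 0-5 ratings for two hypothetical tools; not measurements.
EXAMPLE_RATINGS = {
    "fast_tool": {"speed": 5, "contrast": 4, "prompt_control": 2,
                  "fidelity": 2, "clean_background": 2, "transparency": 1},
    "careful_tool": {"speed": 2, "contrast": 3, "prompt_control": 5,
                     "fidelity": 5, "clean_background": 5, "transparency": 4},
}


def use_case_score(ratings: dict[str, float], use_case: str) -> float:
    """Weighted mean of the ratings that matter for this use case (missing = 0)."""
    weights = WEIGHTS[use_case]
    total = sum(weights.values())
    return sum(w * ratings.get(name, 0.0) for name, w in weights.items()) / total


def pick(tools: dict[str, dict[str, float]], use_case: str) -> str:
    """Name of the highest-scoring tool for the given use case."""
    return max(tools, key=lambda name: use_case_score(tools[name], use_case))
```

With these placeholder numbers, `pick(EXAMPLE_RATINGS, "social_thumbnail")` returns `"fast_tool"`, while `"product_mockup"` and `"research_teaching"` both return `"careful_tool"`.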

A pragmatic verdict

If you are reviewing free image generators today, do not chase hype language. Build a quick scorecard around fidelity, queue time, output policy, style range, repeatability, and rights. The service that consistently clears those six gates is the one worth keeping in your toolkit. Everything else is either a novelty or a useful but narrow specialist. That framing makes the verdict more durable, because it explains why the tool wins instead of just announcing that it does.
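Here is a minimal sketch of the six-gate scorecard. It assumes you record a pass or fail for each gate in every test session. "Consistently" is read here as clearing a gate in at least 80% of sessions. The split between specialist and novelty (at least two consistently cleared gates) is a hypothetical cutoff. Both values are parameters to tune. Returning the gates a tool missed keeps the verdict explainable rather than just announced.

```python
GATES = ("fidelity", "queue_time", "output_policy", "style_range", "repeatability", "rights")


def gate_pass_rates(sessions: list[dict[str, bool]]) -> dict[str, float]:
    """Fraction of sessions in which each gate was cleared (unrecorded = failed)."""
    if not sessions:
        raise ValueError("need at least one test session")
    return {g: sum(s.get(g, False) for s in sessions) / len(sessions) for g in GATES}


def verdict(sessions: list[dict[str, bool]], consistency: float = 0.8,
            specialist_min: int = 2) -> tuple[str, list[str]]:
    """Classify a tool and report which gates it failed to clear consistently."""
    rates = gate_pass_rates(sessions)
    missed = [g for g in GATES if rates[g] < consistency]
    cleared = len(GATES) - len(missed)
    if not missed:
        return "keeper", missed
    if cleared >= specialist_min:
        return "specialist", missed
    return "novelty", missed
```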